Crypto AI company OpenServ is trying to sell two things at once: an AI infrastructure story and a crypto token story. Its claim that its new model, SERV Nano, can match or beat OpenAI on some tasks has made that pitch more interesting, but it has also raised the standard of proof.

The company describes itself as an end-to-end suite for building, launching, and operating autonomous startups, with product rails that span AI agents, workflow tooling, reasoning architecture, token launch mechanics, and on-chain monetization. That places it in a category that remains underbuilt.

A large share of the AI market still revolves around models, wrappers, and user interfaces, while a more difficult operational layer sits lower in the stack, where systems need bounded reasoning, cost discipline, auditable outputs, and enough structure to handle tasks that carry budget, execution risk, and real-world consequences.

The company’s branding around its launch on Base and Solana has raised a basic but important question. Is OpenServ a blockchain project, or is it an AI project with blockchain rails attached?

The available evidence points toward the latter. OpenServ’s own documentation presents the platform as an agentic infrastructure layer that supports AI-driven products and autonomous business workflows, while the crypto side handles token creation, launch mechanics, incentives, fee flows, and capitalization.

Its $SERV token documentation describes the asset as a native ecosystem token tied to usage, burn, and reward mechanisms across the platform. That framing points toward a crypto-native AI business, rather than a base-layer blockchain protocol.

OpenServ is not trying to compete with Base, Solana, or any other chain as a network. It is trying to sit above models and above chains, then own a layer where agents can be structured, deployed, and monetized.

In practice, that means the blockchain element serves distribution, launch, and economic coordination, while the core technical proposition sits inside the orchestration and reasoning layer. The market has started to reward projects that can present this as a full-stack system.

The risk is that multiple claims can be bundled into a single narrative premium before each layer has cleared its own evidentiary threshold.

Base, Solana, and the attempt to turn AI infrastructure into a crypto-native business model

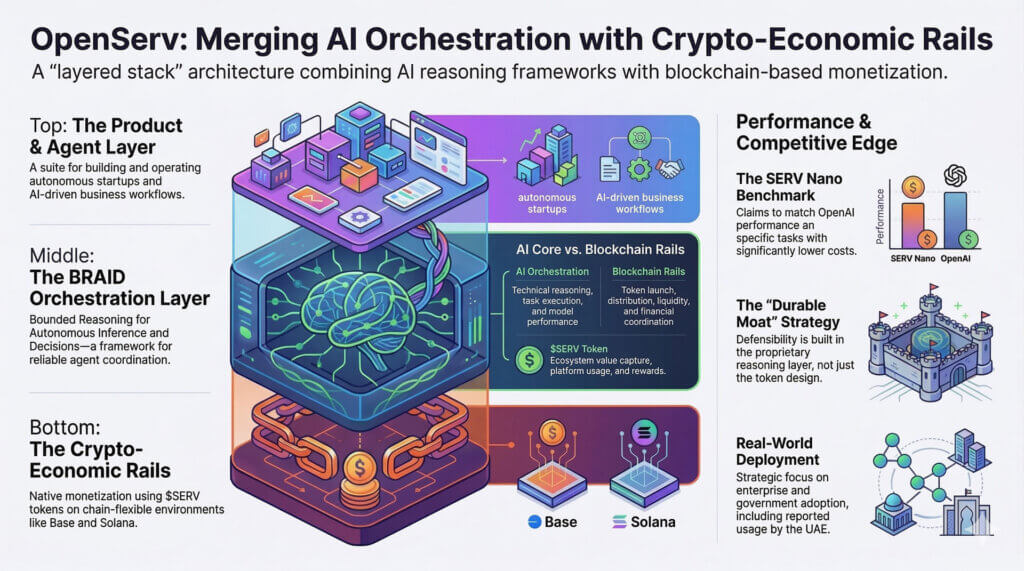

OpenServ’s architecture is easiest to understand as a layered stack. At the top sits the product narrative around autonomous startups, AI agents, and self-serve tooling. In the middle sits the orchestration claim, where OpenServ argues it has built a structured reasoning framework that can coordinate agent behavior more efficiently than generic prompt chains.

At the bottom sits the crypto monetization layer, where projects can launch tokens, create liquidity, and route platform value through an ecosystem asset. The company’s public materials repeatedly tie these pieces together.

Its website presents building, launching, and running as one continuous path, while the docs spell out token launch mechanics and ecosystem value capture in more detail.

That structure helps explain the use of Base and Solana. Base gives OpenServ an EVM-aligned environment for token launches and liquidity workflows, while Solana gives it access to a faster, lower-cost ecosystem that remains active in retail token experimentation and on-chain application design.

The use of both chains broadens the platform’s addressable market and gives OpenServ a way to present itself as chain-flexible rather than chain-dependent. For a company trying to sell AI tooling into a crypto-native audience, that design makes commercial sense.

It allows OpenServ to say its reasoning layer can drive autonomous systems, while the blockchain rails handle launch, ownership, incentives, and financial coordination.

A harder question sits underneath the packaging: where does the durable moat actually live? A token launch framework can attract attention quickly, especially when it taps into the current market appetite for AI-linked assets. Distribution can move fast. Capital can move even faster.

Defensibility usually lives deeper in the stack. If OpenServ’s durable edge sits in orchestration, then Base and Solana function as useful deployment venues, while the real asset is the proprietary reasoning layer that claims to make AI agents cheaper, faster, and more reliable.

If the core edge sits instead in token design and chain-level packaging, then the platform looks closer to a crypto distribution machine wrapped around an AI narrative.

The blockchain assessment, therefore, needs to stay tied to the benchmarks. OpenServ’s crypto rails can explain how value moves through the ecosystem. They do not answer whether the system actually performs better than alternatives.

The market often compresses these issues into a familiar checklist: a strong team, a large market, early positioning, and an underpriced token. That framing can produce attention and liquidity.

It does not resolve whether the product has crossed the line from interesting architecture to independently validated infrastructure. The value of Base and Solana in this setup depends on what they are supporting.

If they are supporting a reasoning layer with measurable economic and operational gains, the blockchain component becomes part of a coherent stack. If they are supporting a narrative premium around benchmark snippets and selective adoption language, then the on-chain layer amplifies volatility more than it compounds product strength.

OpenServ’s own materials give enough evidence to establish one point clearly. This is a crypto-native AI platform that uses blockchain for launch, monetization, and ecosystem coordination.

That seems more precise than calling it a blockchain protocol, and more useful than reducing it to an AI wrapper with a token. The platform is trying to merge agent tooling with on-chain economic rails, then own the operational layer between models and monetized deployment.

That ambition is clear. The remaining work lies in proving that the middle of the stack is as strong as the outer packaging suggests.

OpenAI comparisons, SERV Nano, and the benchmark claims carrying the narrative load

The center of gravity in OpenServ’s current positioning sits in its benchmark language. The most forceful public claims center on the company’s reasoning framework and its SERV Nano offering, with executives and promoters arguing that the system can outperform or match OpenAI models on standard evaluations while running at a sharply lower cost and higher speed.

Those claims are designed to do two things at once. First, they signal that OpenServ is working on a real technical bottleneck inside agent systems. Second, they create a valuation bridge between infrastructure performance and token upside.

Once the market hears “matched GPT-5.4 at 20x lower cost and 3x the speed,” the burden of proof shifts to methodology, task selection, reproducibility, and evidence of deployment.

OpenServ has published material around its BRAID framework, short for Bounded Reasoning for Autonomous Inference and Decisions. The company says this layer improves performance-per-dollar and boosts reliability across bounded tasks by replacing loosely structured prompting with a more deterministic, machine-readable process.

The associated arXiv paper presents the framework in academic form and references internal benchmark logging. That gives OpenServ more technical surface area than a typical promo campaign. It also means the strongest claims can be tested against a higher standard.

The OpenAI comparison needs careful handling. OpenAI’s own documentation for GPT-5.4 nano frames the model as a low-cost, high-speed option for high-volume tasks.

That positioning already suggests the comparison is more nuanced than a simple frontier-versus-frontier showdown. When a third-party framework claims it can match or surpass an OpenAI model, the result can reflect several different sources of lift.

It can come from narrower task framing. It can come from routing logic. It can come from deterministic scaffolding. It can come from constraints that reduce output variance. It can come from cost accounting that measures system-level efficiency rather than raw model capability.

Each of those can be commercially meaningful. Each one also says something different about what has been achieved.

For OpenServ, the key question is what exactly is being compared. If SERV Nano is a model, then the company is making one kind of claim: model against model. If it is an orchestration layer or a structured wrapper that sits atop another model, then the claim takes a different shape.

If the result depends on bounded tasks with narrow decision trees, that can still be useful in enterprise settings where reliability and cost control carry more weight than a broad conversational range. If the result is being generalized into “beating every OpenAI model,” then the language moves faster than the information needed to evaluate it.

That distinction becomes even more important because the strongest market narratives often form around a cluster of adjacent claims. OpenServ’s public messaging combines benchmark wins, large speed and cost differentials, enterprise usage, government deployment language, and an under-$50 million valuation frame promoted by supporters.

At that point, the benchmark is doing more than technical work. It is underwriting a token thesis.

Public market data from CoinGecko currently places SERV in the small-cap range, with a market capitalization in the mid-teens of millions of dollars as of the latest review, which keeps the asymmetry pitch alive for speculators. Yet token valuation and benchmark validity sit on different ladders.

A low market cap can create upside if the product is real. It can also create a fast-moving narrative pocket long before the product has been independently established.

Where the proof threshold now sits

None of this means the benchmark claims should be dismissed. A structured reasoning layer that delivers higher accuracy per dollar on bounded tasks would address a real pain point in enterprise AI.

Cost curves still matter. Latency still matters. Reliability under constraint still matters.

Enterprises do not need every workflow to resemble frontier research. Many need systems that execute repeatable tasks cheaply, quickly, and within defined boundaries.

That is exactly the environment where an orchestration layer can create value. It is also the environment where the proof standard should be the highest, because bounded systems can appear strong under curated conditions and then degrade when task complexity, ambiguity, or integration risk increases.

The next stage in evaluating OpenServ, therefore, sits in the evidence around configuration, task selection, reproducibility, and customer references. Which OpenAI models were compared, under which conditions? What does “matched” mean numerically and operationally? Were tools enabled? Were context windows aligned? Were tasks chosen from public benchmarks, private enterprise workflows, or internal composites? How much of the cost advantage came from model choice versus orchestration logic?
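
To make the "matched" question concrete, here is a minimal sketch of how an outside reader might pin the term down numerically once per-run figures are published. Everything in it is an assumption for illustration: the `RunResult` type, the `is_match` tolerance, and the placeholder numbers are hypothetical and are not drawn from any OpenServ or OpenAI result.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """One system's result on a shared task set (hypothetical structure)."""
    name: str
    correct: int      # tasks answered correctly
    total: int        # tasks attempted
    cost_usd: float   # total spend for the run

    @property
    def accuracy(self) -> float:
        return self.correct / self.total

    @property
    def correct_per_dollar(self) -> float:
        return self.correct / self.cost_usd


def is_match(a: RunResult, b: RunResult, tolerance: float = 0.01) -> bool:
    """Treat two systems as 'matched' if accuracy differs by at most `tolerance`."""
    return abs(a.accuracy - b.accuracy) <= tolerance


def cost_ratio(baseline: RunResult, candidate: RunResult) -> float:
    """How many times cheaper per task the candidate is than the baseline."""
    baseline_per_task = baseline.cost_usd / baseline.total
    candidate_per_task = candidate.cost_usd / candidate.total
    return baseline_per_task / candidate_per_task


# Placeholder figures for illustration only; not real benchmark data.
baseline = RunResult("baseline_model", correct=90, total=100, cost_usd=2.00)
candidate = RunResult("orchestrated_system", correct=90, total=100, cost_usd=0.10)

print(is_match(baseline, candidate))
print(round(cost_ratio(baseline, candidate), 2))
print(round(candidate.correct_per_dollar / baseline.correct_per_dollar, 2))
```

A comparison like this only means something when both runs share the same task set, tool access, and context limits. It also does not separate how much of the cost gap comes from the underlying model choice and how much from orchestration logic; that split would need an additional run of the same base model without the orchestration layer.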

Those questions do not weaken the case. They define it. A serious infrastructure company should welcome that standard, because durable value in this category will accrue to platforms that can show their work and hold up under independent inspection.

The last layer in the OpenServ thesis sits beyond Base, Solana, and benchmark charts. It sits in proof. Public messaging around the platform has gone beyond model economics and into production credibility, with references to enterprise adoption and use by the UAE government.

Those claims, if fully substantiated, would materially strengthen the platform’s position. They would suggest that OpenServ has moved beyond a well-marketed architecture and into a narrower class of companies that can sell operational AI under real constraints.

That jump is large, and the evidence threshold should rise with it.

So far, public documentation gives partial visibility but not full verification. OpenServ’s own materials provide details on the framework, the token system, and the product architecture.

Press-release distribution and company-linked promotion reference enterprise usage and government-linked production environments. What remains difficult to establish through independent public sources is the exact identity of those deployments, the scope of usage, the distinction between paid production and pilot relationships, and the direct line between benchmark results and deployed business outcomes.

Those details will determine whether OpenServ belongs in the category of credible infrastructure companies or in the wider field of AI-crypto projects that can present an impressive stack faster than they can prove it.

The broader market context helps clarify this. AI infrastructure has moved into a phase where orchestration, control, auditability, and settlement are starting to carry as much strategic weight as model quality.

Recent reporting across crypto and AI has drawn more attention to verification, escrow, machine payments, and the coordination problems that arise when agents move beyond chat and begin transacting or acting under policy constraints. That backdrop gives OpenServ’s pitch more relevance than a generic AI-token narrative.

The company is pointing at a real bottleneck. Agents that carry budget, authority, and operational scope need a trustworthy execution layer. They need structure. They need bounded logic. They need enough determinism to make audit and accountability possible.

That backdrop also sets a more demanding test. Once a platform claims to sit in the trust layer of the agent stack, every supporting assertion takes on operational significance.

A government deployment claim should be nameable, scoped, and attributable. Enterprise relationships should be classifiable as pilots, design partnerships, paid contracts, or production systems. Benchmark papers should allow external readers to understand exactly what has been measured and where the lift originates.

A token should have a clearly legible role in value accrual rather than serving as ambient upside around a SaaS-style platform. Each of these is manageable. Together, they form the real threshold.

OpenServ deserves attention, but the correct frame remains disciplined. The company appears to be building an AI infrastructure platform with blockchain rails on Base and Solana, while using benchmark results against OpenAI-linked models to argue that its reasoning layer can deliver better economics on bounded tasks.

That package addresses a genuine market need. It also creates a promotional surface that can run ahead of independent confirmation.

The next phase will come down to named deployments, reproducible methodology, customer testimony, and evidence that gains from controlled benchmarks translate into messy operating environments.

For now, OpenServ looks less like a standalone blockchain protocol and more like a crypto-native AI infrastructure company.

Its blockchain rails help launch and monetize the platform. Its benchmark claims are carrying the heavier analytical load.

Its opportunity lies in proving that a structured reasoning layer can produce reliable gains in cost, speed, and operational trust. If that proof arrives, the platform will have a stronger foundation than many AI-token narratives currently trading on category heat.

If that proof remains diffuse, the market will still have learned something valuable about where attention is flowing in the next phase of agent infrastructure: toward the layer where models, execution, and monetization meet.